We have just put online the positively evaluated artifacts for ISSTA’16. Congrats to the authors!

https://issta2016.cispa.saarland/accepted-artifacts/

Attending ICSE? Then consider coming two days earlier to attend SEsCPS, the 2nd International Workshop on Software Engineering for Smart Cyber-Physical Systems, where I will be giving a keynote on the current state and challenges of CPS security. Abstract:

On the evening of June 1st we will be jointly organizing a CTF-style Android Hacking Event. At Fraunhofer SIT & TU Darmstadt the organization is led by team[SIK], at Paderborn University & Fraunhofer IEM by the Software Engineering Group. As a “local hacker” you will be able to attend in person at either location: Fraunhofer SIT (Rheinstr.) or Zukunftsmeile 1 in Paderborn. We will try to have a video feed between the two events.

You can also participate as a remote hacker. Remote participants will be listed separately, as we expect them to be more advanced than the student hackers whom this event primarily targets. Prizes will only be given out to local student hackers.

To qualify, you must register (and solve a couple of challenges) by May 11th here.

We have put online information about ISSTA’s artifact evaluation. Note that this year you may provide artifacts ahead of time to positively influence the decision of paper acceptance!

Thanks for the positive feedback on my keynote at the Entwicklertag in Frankfurt! Let’s hope that the insights I shared about our BaaS-Analysis will help make the world a bit more secure…

And thanks a lot to Siegfried, Steven, Robert and Max for the great work! Keep it going!

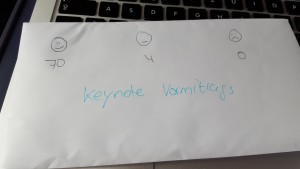

We have just put online information about our two keynote presentations at ESSoS by Karsten Nohl and David Basin. Karsten Nohl will ask the question How much security is too much?, citing some lessons learned from introducing security into a new, large telecommunications startup, while David Basin will elaborate on the quirks of Security Testing and what it actually all means. I am looking forward to two exciting presentations!

As of today, I have joined the editorial board of the IEEE Transactions on Software Engineering (TSE) as an associate editor. I am looking forward to receiving your very best submissions!

I am glad to report that I have just been appointed Program Chair of the 2018 International Symposium on Software Testing and Analysis (ISSTA). ISSTA is the leading research symposium on software testing and analysis, bringing together academics, industrial researchers, and practitioners to exchange new ideas, problems, and experience on how to analyze and test software systems. I wish to thank the organizing chair Frank Tip as well as the entire steering committee for this great honor.

ISSTA 2018 will be co-located with the European Conference on Object-Oriented Programming (ECOOP), in beautiful Amsterdam, Netherlands. Let’s make it a great event!

ICSE is the premier academic conference for Software Engineering. In total, researchers of CYSEC managed to publish at least five ICSE publications this year, two with contributions from SSE:

Recently, our team member Andreas Poller gave an interview on Deutschlandfunk. The radio report shed light on why the German Federal Office for Information Security (BSI) asked us to investigate TrueCrypt, how we carried out the study, and what everyday users should consider when using hard-disk encryption.